[Machine Learning 2021] Transformer (Part 1)

Summary

TLDR: This lecture introduces the Transformer and its applications to natural language processing and speech tasks. It covers the architecture of the Transformer encoder, explaining the roles of self-attention, residual connections, and layer normalization. It highlights the versatility of sequence-to-sequence models through tasks such as machine translation, speech recognition, grammar parsing, and multi-label classification, shows how less obvious problems can be cast as sequence-to-sequence problems, and points to alternative design choices for the encoder block.

Takeaways

- 😃 The Transformer model is a powerful Sequence-to-Sequence (Seq2Seq) model used for various tasks like machine translation, speech recognition, and natural language processing (NLP).

- 🤖 The Transformer consists of an Encoder and a Decoder, where the Encoder processes the input sequence and the Decoder generates the output sequence.

- 🧠 The Encoder in the Transformer uses self-attention mechanisms to capture dependencies between words in the input sequence.

- 🔄 Inside each Encoder block, a residual connection adds the input of each sub-layer (self-attention and the feed-forward network) to its output, and the sum is then passed through layer normalization.

- 💡 The original Transformer architecture is not necessarily the optimal design, and researchers continue to explore modifications like changing the order of operations or using different normalization techniques.

- 🌐 Seq2Seq models can be applied to various NLP tasks like question answering, sentiment analysis, and grammar parsing by representing the input and output as sequences.

- 🚀 End-to-end speech translation, where the model translates speech directly into text without intermediate transcription, is possible with Seq2Seq models.

- 🎤 Seq2Seq models can be used for tasks like text-to-speech synthesis, where the input is text, and the output is an audio waveform.

- 🔢 Multi-label classification problems, where an instance can belong to multiple classes, can be addressed using Seq2Seq models by allowing the model to output a variable-length sequence of class labels.

- 🌉 Object detection, a computer vision task, can also be tackled using Seq2Seq models by representing the output as a sequence of bounding boxes and class labels.

Q & A

What is a Transformer?

-A Transformer is a powerful sequence-to-sequence model widely used in natural language processing (NLP) and speech processing tasks. It uses self-attention mechanisms to capture long-range dependencies in input sequences.

What are some applications of sequence-to-sequence models discussed in the script?

-The script discusses several applications, including speech recognition, machine translation, chatbots, question answering, grammar parsing, multi-label classification, and object detection.

How does a Transformer Encoder work?

-The Transformer Encoder consists of multiple blocks, each containing a self-attention layer, a feed-forward network, and residual connections with layer normalization. The blocks process the input sequence and output a new sequence representation.

What is the purpose of residual connections in the Transformer architecture?

-Residual connections are used to add the input of a block to its output, allowing the model to learn residual mappings and potentially improve gradient flow during training.

Why is layer normalization used in the Transformer instead of batch normalization?

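-Layer normalization computes the mean and standard deviation across the dimensions of a single vector, so it does not depend on batch statistics. For why batch normalization performs worse in Transformers, the lecture points to the paper "Power Norm: Rethinking Batch Normalization In Transformers".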

What is the role of positional encoding in the Transformer?

-Positional encoding is used to inject information about the position of each token in the input sequence, as self-attention alone cannot capture the order of the sequence.

Can the Transformer architecture be improved or modified?

-Yes, the script mentions research papers that explore alternative designs for the Transformer, such as rearranging the layer normalization position or introducing a new normalization technique called Power Normalization.

What is the purpose of multi-head attention in the Transformer?

-Multi-head attention allows the model to attend to different representations of the input sequence in parallel, capturing different types of relationships and dependencies.

Can sequence-to-sequence models be applied to tasks beyond translation?

-Yes, the script mentions that sequence-to-sequence models can be applied to various NLP tasks, such as question answering, grammar parsing, multi-label classification, and even object detection in computer vision.

Why are sequence-to-sequence models useful for languages without written forms?

-Sequence-to-sequence models can be used for speech-to-text translation, allowing languages without written forms to be translated directly into written languages that can be read and understood.

Outlines

🤖 Introduction to Transformer and Seq2Seq Models

This paragraph introduces the topic of Transformer and its relation to BERT. It explains the concept of Sequence-to-Sequence (Seq2Seq) models, which can be used for various tasks such as speech recognition, machine translation, and speech translation. The paragraph provides examples of how Seq2Seq models can be applied to tasks like translating Taiwanese to Chinese and Taiwanese speech synthesis.

🗣️ Applications of Seq2Seq Models in Language Processing

This paragraph discusses the potential applications of Seq2Seq models in language processing tasks, such as training a chatbot, question answering, translation, summarization, and sentiment analysis. It explains how various NLP tasks can be framed as question-answering problems and solved using Seq2Seq models. The paragraph also mentions the limitations of using a single Seq2Seq model for all tasks and the need for task-specific models.

🌐 Versatility of Seq2Seq Models across NLP Tasks

This paragraph further explores the versatility of Seq2Seq models in solving various NLP tasks, including grammar parsing, multi-label classification, and object detection. It explains how even tasks that may not seem like Seq2Seq problems can be formulated as such and solved using these models. The paragraph also mentions the potential of using Seq2Seq models for end-to-end language tasks without intermediate steps.

🧩 Seq2Seq Models for Grammar Parsing and Classification

This paragraph delves into the application of Seq2Seq models for grammar parsing and multi-label classification tasks. It explains how a tree structure can be represented as a sequence and fed into a Seq2Seq model for grammar parsing. The paragraph also discusses how multi-label classification problems, where an instance can belong to multiple classes, can be solved using Seq2Seq models by allowing the model to output variable-length sequences.

🔍 Overview of Transformer Architecture

This paragraph provides an overview of the Transformer architecture, which is the focus of the lecture. It explains that the Transformer consists of an Encoder and a Decoder, and the Encoder's primary function is to take a sequence of vectors as input and output another sequence of vectors. The paragraph introduces the concept of self-attention and its role in the Encoder architecture.

🧱 Detailed Structure of Transformer Encoder

This paragraph goes into the detailed structure of the Transformer Encoder. It explains the various components and operations within each block of the Encoder, including multi-head self-attention, residual connections, layer normalization, and feed-forward networks. The paragraph also discusses the reasoning behind certain design choices and potential alternatives proposed in research papers.

🔄 Alternatives and Improvements to Transformer Architecture

This paragraph discusses potential alternatives and improvements to the original Transformer architecture. It mentions research papers that explore the positioning of layer normalization within the blocks and the use of different normalization techniques, such as Power Normalization, which may outperform layer normalization in certain scenarios. The paragraph encourages exploring and considering alternative architectures for optimal performance.

Keywords

💡Transformer

💡Sequence-to-sequence model (Seq2Seq)

💡Speech recognition

💡Machine translation

Highlights

Transformer is a sequence-to-sequence (seq2seq) model, which takes in a sequence as input and outputs another sequence, but the output length is determined by the machine itself.

Transformer can be applied to various tasks like speech recognition, machine translation, chatbots, question answering, grammar parsing, multi-label classification, and object detection, by treating the task as a seq2seq problem.

The concept of using seq2seq models for tasks like grammar parsing, which traditionally do not seem like seq2seq problems, was introduced in a 2014 paper titled "Grammar as a Foreign Language".

Transformer has an encoder and a decoder architecture, with the encoder processing the input sequence and the decoder determining the output sequence.

In the Transformer encoder, each block consists of a multi-head self-attention layer, followed by a residual connection and layer normalization, then a feed-forward network with residual connection and layer normalization.

The self-attention layer in the Transformer encoder considers the entire input sequence and outputs a vector for each position, allowing the model to capture long-range dependencies.

The residual connection in the Transformer encoder adds the input vector to the output vector, while layer normalization normalizes the vector across its dimensions.

The original Transformer architecture design may not be optimal, as suggested by research papers exploring alternative designs, such as changing the order of layer normalization and residual connections.

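A paper titled "On Layer Normalization in the Transformer Architecture" proposes moving layer normalization to the input of each sub-layer, inside the residual branch, instead of applying it after the residual addition; the lecture reports that this reordering gives better results.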

Another paper, "Power Norm: Rethinking Batch Normalization In Transformers", suggests using power normalization instead of layer normalization, potentially improving performance.

The Transformer encoder adds positional encoding to its input, as self-attention alone cannot capture the order of the sequence.

The Transformer decoder architecture, which determines the output sequence, is not covered in detail in this transcript.

Transformer models can be trained on large datasets, such as TV shows and movies for chatbots, or paired data like audio and transcripts for speech recognition and translation tasks.

The transcript provides an example of training a Transformer model on 1,500 hours of Taiwanese drama data to perform Taiwanese speech recognition and translation to Chinese text.

The transcript discusses the potential of using Transformer models for end-to-end speech translation, particularly for languages without written forms, by training on paired audio and text data from another language.

Transcripts

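OK, next we are going to talk about the Transformer, which everyone will use in Homework 5. We have already mentioned the Transformer no fewer than N times. If you still don't know what a Transformer is: the Transformer is actually 變形金剛, the robots from the movies, you know? Their English name is exactly "Transformer".

The Transformer is also very closely related to BERT, which we will cover later. That is why there is a BERT peeking out here on the slide: it means that the Transformer and BERT are closely related.

So what is a Transformer? A Transformer is a sequence-to-sequence model, which we abbreviate as Seq2seq. And what is a sequence-to-sequence model? Earlier, when we discussed the case where the input is a sequence, we said there are several possibilities for the output. In one, the input and the output have the same length; that was Homework 2. In another, the model outputs only one thing; that was Homework 4. The case in Homework 5 is that we do not know how long the output should be, and the machine decides the output length by itself.

What examples or applications need this kind of sequence-to-sequence model, where the input is a sequence and the output is a sequence, but we do not know how long the output should be and the machine has to decide the output length by itself?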

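For example, one good application is speech recognition. In speech recognition the input is an audio signal, and as we have seen many times in this course, an audio signal is just a sequence of vectors. The output is the recognition result, that is, the text corresponding to the input audio. Here we use circles to represent the text; each circle stands for, say, one Chinese character. The input and output lengths are of course related, but there is no fixed relationship between them. If the input audio has length T, we cannot tell from T what the output length N must be. So what do we do? Let the machine decide. The machine listens to the content of the audio and decides by itself how many characters the recognized sentence should contain. That is speech recognition.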

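There are many other examples. In Homework 5 we will do machine translation: the machine reads a sentence in one language and outputs a sentence in another language. In machine translation the input text has length N and the output sentence has length N', and the relationship between N and N' is also left to the machine. Say the input is the sentence 機器學習 and the output is "machine learning". The input has four characters and the output has two English words, but it is not true for every Chinese-English pair that the output is half as long as the input. How long the English sentence should be for a given input is decided by the machine.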

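You can even tackle more complex problems, such as speech translation. What is speech translation? You say something to the machine, for example "machine learning", and what it outputs is not English: it directly translates the English audio it hears into Chinese. You say "machine learning" to it, and it outputs 機器學習.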

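You may ask why we need a task like speech translation at all. Why not just do speech recognition, then machine translation, and chain the two systems together to get speech translation? The reason is that many languages in the world have no writing system at all. There are more than 7,000 languages in the world, and more than half of them have no written form. For those languages, speech recognition may simply be impossible, because there is no text to transcribe into. But could we do speech translation for these languages and translate them directly into text that we can read?

A good example might be Taiwanese speech recognition. I am not saying that Taiwanese has no writing; many people consider it to have a written form. But written Taiwanese is not that widespread. I hear it is now taught in elementary schools, but it is still not something the average person can read. So if you do speech recognition, give the machine a piece of Taiwanese, and it outputs something like 母湯, you would have no idea what the sentence means, right? So we hope the machine can do translation instead, speech translation: you say a sentence in Taiwanese, and it directly outputs a Chinese sentence with the same meaning, which ordinary people can read.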

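Is that possible? Can we train a neural network that listens to audio in one language and outputs text in another language? It actually is possible. For the Taiwanese example, we know that to train a neural network you need paired inputs and outputs: you need Taiwanese audio paired with the corresponding Chinese text. Is such data easy to collect? It is not impossible. For example, YouTube has lots of 鄉土劇 (Taiwanese-language TV dramas), and as you know these have Taiwanese audio with Chinese subtitles. So you just download the Taiwanese audio and the Chinese subtitles, and you have the correspondence between Taiwanese audio and Chinese text. Then you can just brute-force train a model, the Transformer we are about to discuss, and have the machine do Taiwanese speech recognition directly: Taiwanese in, Chinese out.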

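You may think this idea is crazy and that it sounds like it has many, many problems. Well, our lab downloaded 1,500 hours of TV drama data and really did use it to train a speech recognition system. You may think this sounds problematic. For example, the dramas contain a lot of noise and a lot of music: we just ignored that. The subtitles do not necessarily line up with the audio: we just ignored that too. You might also think: doesn't Taiwanese have things like 台羅拼音 (Tâi-lô romanization)? Taiwanese has something like phonetic symbols, so maybe we could first recognize the phonetic symbols as an intermediate step and then convert them into Chinese. We did not do that either. We directly trained a model whose input is the audio signal and whose output is Chinese text. This practice of not thinking too hard, just pouring the data in and training a model, is what we call 硬train一發 (just brute-force training it), you know?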

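So you may wonder whether brute-force training like this can really produce a Taiwanese speech recognition system. It actually can. Here are some real results. After the machine has listened to 1,500 hours of TV dramas, you can give it a sentence in Taiwanese and it outputs a sentence of Chinese text. These are real examples. The audio the machine heard sounds like this; you can treat it as a Taiwanese listening test and see whether what you recognize matches the machine's output.

The machine hears this sentence: 你的身體撐不住 (spoken in Taiwanese). What does it output? It outputs 你的身體撐不住 ("your body can't take it"). The audio is the Taiwanese for that sentence, but the machine does not output the phonetic form 無勘; it outputs 撐不住.

Or the machine hears this audio: 沒事你為什麼要請假 (spoken in Taiwanese), "Why are you taking leave when nothing is wrong?" When the machine hears the Taiwanese for "nothing is wrong", it does not output 沒代沒誌; it outputs 沒事. It hears the four syllables 沒代沒誌, but it knows that in Chinese this should probably be rendered as 沒事. So the machine's output is 沒事你為什麼要請假.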

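But the machine also makes mistakes quite easily. I picked out a few error cases for you to hear. Listen to this audio: 不會膩嗎 (spoken in Taiwanese). When I listened to it myself, my answer was the same as the machine's: 要生了嗎 ("Is the baby coming?"). But the correct answer is 不會膩嗎 ("Don't you get tired of it?").

The machine also has trouble with inverted word order. Sometimes converting a Taiwanese sentence into Chinese requires reordering, and the machine does not seem to have learned that part very well. For example, it hears this sentence: 我有跟廠長拜託 (spoken in Taiwanese). The machine's output is 我有幫廠長拜託 ("I pleaded on the factory director's behalf"). But this sentence actually needs reordering: it means 我拜託廠長 ("I pleaded with the factory director"). When the relationship between Taiwanese and Chinese requires reordering, it still looks a bit hard for the machine to learn.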

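What this example is meant to show is that converting Taiwanese audio directly into Traditional Chinese text is not impossible; it can be done. Many people in Taiwan are working on Taiwanese speech recognition. If you want to know more about it, you can look at the website shown below.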

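The reverse of Taiwanese speech recognition is Taiwanese speech synthesis, right? If a model takes Taiwanese audio as input and outputs Chinese text, that is speech recognition. The other way round, text in and audio out, is speech synthesis. Here is a demo of Taiwanese speech synthesis. The data comes from 台灣媠聲; if you Google 台灣媠聲 you can find this dataset, which contains Taiwanese audio. It sounds like this. For example, you tell it: 歡迎來到台灣台大語音處理實驗室 ("Welcome to the NTU Speech Processing Laboratory in Taiwan").

I do need to explain one thing here: we have not actually built an end-to-end model yet. The model is still split into two stages. It first converts the Chinese text into Tâi-lô romanization, which is like KK phonetic symbols for Taiwanese, and then converts those phonetic symbols into an audio signal. The step from phonetic symbols to audio is a network similar to the Transformer; it is actually a model called Tacotron, which is essentially a Seq2Seq model and looks roughly like this.

So you input the text 歡迎來到台大語音處理實驗室, and the machine's output sounds like this: 歡迎來到台大語音處理實驗室 (spoken in Taiwanese). Or you give it this Chinese sentence, and the Taiwanese it outputs sounds like this: 最近肺炎真嚴重，要記得戴口罩、勤洗手，有病就要看醫生 (spoken in Taiwanese), "The pneumonia outbreak has been serious lately; remember to wear a mask, wash your hands often, and see a doctor if you are sick."

So you really can synthesize Taiwanese audio using the Transformer or Seq2Seq models we learn in this course.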

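So far we have talked about speech-related applications. Seq2Seq models are also used very widely on text. For example, you can use a Seq2Seq model to train a chatbot. A chatbot is something where you say a sentence to it and it gives you a response. Both the input and the output are text, and text is just a sequence of vectors, so you can certainly build a chatbot with a Seq2Seq model. How do you train one? You collect a large amount of human conversation, for example lines from TV series and movies. You can collect lots of dialogue between people. Suppose that in the dialogue one person says "Hi" and another says "Hello! How are you today?" Then you teach the machine that when the input is "Hi", its output should be as close as possible to "Hello! How are you today?" You can train a Seq2Seq model this way; then you say a sentence to it and it gives you a response.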

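In fact, Seq2Seq models are used in NLP, natural language processing, more widely than you might imagine. Many natural language processing tasks can be viewed as question answering (QA). How so? Question answering means you give the machine a passage to read, ask it a question, and hope it gives you the correct answer. Many tasks that you would think have nothing to do with question answering can be cast as QA.

For example, suppose you want to do translation. The passage the machine reads is an English sentence. The question is: what is the German translation of this sentence? The output answer is the German.

Or you want the machine to summarize automatically. Summarization means giving the machine a long article and asking it to extract the key points. So you give the machine a passage, the question is "what is the summary of this passage?", and you expect it to output a summary.

Or you want the machine to do sentiment analysis. What is sentiment analysis? The machine automatically judges whether a sentence is positive or negative. An application would be: you built a product, launched it, and want to know what people online think of it, but you cannot keep having someone go through PTT reading every post. So what do you do? You build a sentiment analysis model. When a post mentions your product, you feed it to your model to judge whether the post is positive or negative. How do you treat sentiment analysis as a QA problem? You give the machine the text whose sentiment you want to judge, the question is "is this sentence positive or negative?", and you hope the machine tells you the answer.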

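So all kinds of NLP problems can often be viewed as QA problems, and QA problems can be solved with a Seq2Seq model. How? The input to the Seq2Seq model is the question and the passage concatenated together, and the output is the answer to the question. That's it. The question plus the passage is one long piece of text, and the answer is a piece of text. As long as the input is a piece of text and the output is a piece of text, as long as the input is a sequence and the output is a sequence, a Seq2Seq model can handle it. So you can brute-force QA with a Seq2Seq model: have it read a passage and a question and directly output the answer. So all kinds of NLP tasks have a chance of being handled with a Seq2Seq model.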
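The "question plus passage in, answer out" framing can be sketched as a small input-building step. This is only an illustration: the function name `build_qa_input`, the whitespace tokenization, and the `[SEP]` separator token are assumptions and are not taken from the lecture.

```python
def build_qa_input(question, passage, sep="[SEP]"):
    """Concatenate question and passage into one token sequence.

    The separator token and whitespace tokenization are illustrative
    assumptions; a real system would use its own tokenizer.
    """
    return question.split() + [sep] + passage.split()


# Different tasks become the same kind of input: only the question changes.
translation = build_qa_input(
    "What is the German translation ?", "Deep learning is very powerful")
sentiment = build_qa_input(
    "Is this review positive or negative ?", "I love this product")

print(sentiment)
```

A Seq2Seq model would then be trained to map each such sequence to the answer sequence (the German sentence, or the word "positive").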

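But I must emphasize one thing. For most NLP tasks, and most speech-related tasks, you will often get better results by customizing a model for the task. Using a Seq2Seq model for everything is like using a Swiss Army knife for everything, right? A Swiss Army knife can do all sorts of things: you can chop firewood with it and you can cut vegetables with it, but it is not necessarily the best tool for the job. So if you customize models for different tasks, you can often get better results than with a plain Seq2Seq model. But task-specific models are not the focus of this course. If you are interested in human language processing, including speech and natural language processing, see the course page linked below for Deep Learning for Human Language Processing, which was taught last year. That course teaches what the best model for each task should be.

For example, in speech recognition, what we just described is a Seq2Seq model: audio in, text out. Today, for the Google Pixel 4, Google officially says that it also uses an end-to-end neural network. Inside the Pixel 4 there is a neural network whose input is the audio signal and whose output is the text directly. But what it uses is not actually a Seq2Seq model; it uses a model called the RNN Transducer. Models like this are designed around particular properties of speech, so they can perform better. What customized models exist for each task is the topic of another course, not what we want to discuss today.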

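I have just described many applications of Seq2Seq models in speech and natural language processing. There are also many applications that you would not think of as Seq2Seq problems, but that you can brute-force with a Seq2Seq model anyway.

Take syntactic parsing, for example. In syntactic parsing you give the machine a piece of text, say "Deep learning is very powerful", and the machine has to produce a parse tree that tells us: "deep" plus "learning" form a noun phrase; "very" plus "powerful" form an adjective phrase; the adjective phrase plus "is" becomes a verb phrase; and the verb phrase plus the noun phrase form a sentence. Syntactic parsing means producing a syntactic tree like this.

So in syntactic parsing, if you want to solve it with deep learning, the input is a piece of text, which is a sequence, but the output does not look like a sequence: it is a tree structure. In fact, though, a tree structure can be forced into a sequence. How? This tree corresponds to a sequence like this one, and from the sequence you can read off the tree. There is an S with a left parenthesis and a right parenthesis. Inside S there is a noun phrase (NP) with its own left and right parentheses. There is a verb phrase (VP) with its own left and right parentheses; inside the VP there is "is" and then the adjective phrase, which has its own left and right parentheses. This sequence represents the tree structure.

Once you have converted the tree structure into a sequence, you can brute-force it with a Seq2Seq model. You train a Seq2Seq model to read the sentence and directly output this string of tokens, and then you convert the string back into a tree. That way you can do syntactic parsing with a Seq2Seq model.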
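The tree-to-sequence conversion described here can be sketched in a few lines. The nested-tuple tree format, the label `ADJV`, and the helper names `linearize` and `parse` are assumptions made for illustration; the lecture only shows the bracketed sequence on a slide.

```python
def linearize(tree):
    """Flatten a parse tree into a token list with explicit parentheses."""
    if isinstance(tree, str):              # a leaf is just a word
        return [tree]
    label, *children = tree
    tokens = ["(", label]
    for child in children:
        tokens.extend(linearize(child))
    tokens.append(")")
    return tokens


def parse(tokens):
    """Rebuild the nested tree from a token list produced by linearize()."""
    def helper(i):
        if tokens[i] != "(":
            return tokens[i], i + 1        # a plain word
        label = tokens[i + 1]
        children = []
        i += 2
        while tokens[i] != ")":
            child, i = helper(i)
            children.append(child)
        return (label, *children), i + 1   # skip the closing parenthesis
    tree, end = helper(0)
    if end != len(tokens):
        raise ValueError("trailing tokens after the tree")
    return tree


tree = ("S",
        ("NP", "deep", "learning"),
        ("VP", "is", ("ADJV", "very", "powerful")))
sequence = linearize(tree)
print(" ".join(sequence))
# ( S ( NP deep learning ) ( VP is ( ADJV very powerful ) ) )
```

A Seq2Seq parser would be trained to emit the token list in `sequence` given the words of the sentence; `parse` then recovers the tree from the model's output.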

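This idea sounds crazy, but it really can be done. You can read a paper called "Grammar as a Foreign Language". It is not a very recent paper: it was posted on arXiv at the end of 2014, so it is really an ancient-mythical-beast-level paper. When it came out, Seq2Seq models were not yet popular and were mainly used only for translation. That is why the title is "Grammar as a Foreign Language": it treats syntactic parsing as a translation problem and grammar as another language, and directly applies a model that people at the time thought could only be used for translation. And it achieved state-of-the-art results.

I actually met the first author, Oriol Vinyals, at an international conference. Back then Seq2Seq models were still very trendy, and in my understanding this kind of model ought to be quite hard to train. So I asked him whether there were any tips for training Seq2Seq models: you used a Seq2Seq model for syntactic parsing and pushed it all the way to state of the art, so there must be some impressive tricks, right? He said there were no tips. He said, "I didn't even use Adam. I just used gradient descent and it trained. It worked the first time I trained it. I only tuned the hyperparameters a little to reach state of the art." I don't know whether that is true or not. Either way, today Seq2Seq models really are applied very widely across all kinds of applications.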

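There are other tasks where you can use a seq2seq model, for example multi-label classification. What is multi-label classification? Here you should compare multi-class classification and multi-label classification. The names sound similar, but they are different things. Multi-class classification means there is more than one class, and the machine has to pick one class out of several. Multi-label classification means the same item can belong to more than one class. For example, in document classification, one article might belong to classes 1 and 3, and another to classes 3, 9 and 17.

You may ask how to solve a multi-label classification problem. Can we just treat it as a multi-class classification problem? For example, I feed the articles to a classifier. Normally the classifier outputs only one answer, the one with the highest score. Now I output the top three instead and see whether that solves the multi-label problem. But this approach may not work. Why? Because the number of classes differs from article to article: some articles belong to two classes, some to one, some to three. So if you just fix a threshold and take the three highest-scoring classes from the classifier as your output, you obviously will not necessarily get good results.

So what do we do? Here you can brute-force it with seq2seq, you know? Input an article, output the classes, done. The machine decides by itself how many classes to output. We said that with a seq2seq model the machine decides how many things to output, that is, the length of the output sequence. Since you cannot fix the number of classes, let the machine decide for you: it decides how many classes each article belongs to.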
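The contrast between a fixed top-3 output and a machine-chosen output length can be sketched as follows. Everything here is a toy under stated assumptions: the `<END>` marker, the helper names, and the stand-in decoder that replays a known answer are illustrative and do not come from the lecture; a trained seq2seq decoder would take the place of `toy_decoder`.

```python
END = "<END>"  # assumed end-of-sequence marker


def top_k_labels(scores, k=3):
    """Fixed-size baseline: always return the k highest-scoring classes."""
    return sorted(scores, key=scores.get, reverse=True)[:k]


def decode_labels(next_label, max_len=10):
    """Seq2seq-style decoding: emit labels until the model emits END."""
    output = []
    while len(output) < max_len:
        label = next_label(output)
        if label == END:
            break
        output.append(label)
    return output


def toy_decoder(target):
    """Stand-in for a trained decoder: replays a known label list, then END."""
    def next_label(prefix):
        return target[len(prefix)] if len(prefix) < len(target) else END
    return next_label


scores = {"class 1": 0.9, "class 3": 0.8, "class 9": 0.1, "class 17": 0.05}
print(top_k_labels(scores))                                   # always three labels
print(decode_labels(toy_decoder(["class 1", "class 3"])))     # two labels
print(decode_labels(toy_decoder(["class 3", "class 9", "class 17"])))  # three labels
```

The top-3 baseline is forced to include a low-scoring class for the first article, while the decoding loop stops whenever the model emits the end marker.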

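Or take object detection. It looks like a problem that has nothing at all to do with seq2seq models, but it too can be brute-forced with a seq2seq model. Object detection means you give the machine an image and it draws boxes around the objects in it: this one is a zebra, and this one is also a zebra. This kind of problem can be done with seq2seq. We will not go into how here; I have put a reference and a link here for you.

The point of all this is to tell you that the seq2seq model is a very powerful, very useful model. Now let's learn how to actually do seq2seq.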

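A typical seq2seq model is split into two parts: an Encoder and a Decoder. You input a sequence, the Encoder processes it, and the processed result is passed to the Decoder, which decides what sequence to output. We will go into the internal architecture of the Encoder and the Decoder in a moment.

The seq2seq model goes back quite a long way. In September 2014 a paper using a seq2seq model for translation was already posted on arXiv. As you can imagine, the seq2seq model back then looked rather basic. Today, when seq2seq models come up, the first thing that comes to mind is probably today's protagonist, the Transformer. It has an Encoder and a Decoder, with lots of colorful blocks inside. In a moment we will go through what each of these colorful blocks does.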

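Now let's talk about the Encoder. What the Encoder of a seq2seq model does is take a row of vectors and output another row of vectors. Many models can do that. The first one you might think of is self-attention, which we just finished covering, but RNNs and CNNs can do it too: input a row of vectors, output another row of vectors of the same length. In the Transformer, the Encoder uses self-attention. This looks a bit complicated, so we will use another figure to explain the Encoder architecture carefully, and then compare it with the figure in the original Transformer paper.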

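The Encoder is divided into many blocks. Each block takes a row of vectors as input and outputs a row of vectors. You feed a row of vectors to the first block, the first block outputs another row of vectors, which is fed to the next block, and the last block outputs the final vector sequence. Each block is not a single layer of the neural network. The reason we do not call each block a layer is that what happens inside a block is the work of several layers.

In the Transformer Encoder, each block does roughly the following. First it does self-attention: given a row of input vectors, self-attention considers information from the whole sequence and outputs another row of vectors. Then this row of vectors is fed to a fully connected feed-forward network, which outputs another row of vectors. That row of vectors is the output of the block.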

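In the original Transformer, what the block does is actually more complicated. Here is what really happens. This is the self-attention layer. When we discussed self-attention, we said that you input a row of vectors and it outputs a row of vectors, where each output vector is the result of considering all the inputs. The Transformer adds one design on top of this: we do not just output this vector; we also add its input to it. The input is brought over and added directly to the output to get the new output. That is, if this vector is called a and this vector is called b, you add a and b together as the new output. A network architecture like this is called a residual connection. Residual connections are used very widely in deep learning. If we have time later, we will explain in detail why they are used. For now, just know that there is a kind of network design called a residual connection, which adds the input directly to the output to get a new vector.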
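A minimal sketch of the residual connection, assuming vectors are plain Python lists. The `uniform_attention` function is only a toy stand-in for self-attention (every position outputs the average of all inputs) so that the example runs without any learned weights; both function names are hypothetical.

```python
def uniform_attention(seq):
    """Toy stand-in for self-attention: each output is the mean of all inputs."""
    dim = len(seq[0])
    mean = [sum(v[d] for v in seq) / len(seq) for d in range(dim)]
    return [list(mean) for _ in seq]


def with_residual(sublayer, seq):
    """Residual connection: add each input vector to the sub-layer's output."""
    out = sublayer(seq)
    return [[a + b for a, b in zip(x, y)] for x, y in zip(seq, out)]


seq = [[1.0, 2.0], [3.0, 4.0]]
print(with_residual(uniform_attention, seq))
# [[3.0, 5.0], [5.0, 7.0]]
```

Because the addition is element-wise, the sub-layer's output must have the same dimension as its input, which is why the row of vectors keeps its shape through the block.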

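After getting the result of the residual connection, we apply something called normalization. What is used here is not batch normalization but layer normalization. Layer normalization is a bit simpler than batch normalization. It works like this: it takes a vector as input and outputs another vector, and it does not need to consider the batch. When we discussed batch normalization, we had to consider the batch; layer normalization does not use batch information. One vector in, one vector out.

What does layer normalization do? It computes the mean and standard deviation of the input vector. But note the difference. In batch normalization, we compute the mean and standard deviation over the same dimension across different examples, that is, across different feature vectors. In layer normalization, we compute the mean and standard deviation over the different dimensions within the same feature vector, the same example.

Once the mean and standard deviation are computed, we can normalize. (I just noticed a bug on this slide: the ' here should not be there. Sorry, please remember to remove it.) We take each dimension of the input vector, subtract the mean m, and divide by the standard deviation to get x', which is the output of layer normalization.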
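The normalization step, x'_i = (x_i - m) / σ computed over the dimensions of one vector, can be sketched as below. The small `eps` term is an added assumption for numerical safety and is not mentioned in the lecture; standard layer normalization also has a learnable scale and shift, which are omitted here. `batch_norm_means` is included only to contrast which axis the statistics are taken over.

```python
import math


def layer_norm(x, eps=1e-5):
    """Normalize one vector using the mean and std of its own dimensions."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    std = math.sqrt(var + eps)   # eps is an assumption, added to avoid division by zero
    return [(v - mean) / std for v in x]


def batch_norm_means(batch):
    """Batch-norm statistics for contrast: one mean per dimension, across examples."""
    return [sum(column) / len(column) for column in zip(*batch)]


x = [1.0, 2.0, 3.0]
print(layer_norm(x))                                      # zero mean within the vector
print(batch_norm_means([[1.0, 2.0, 3.0], [3.0, 4.0, 5.0]]))  # [2.0, 3.0, 4.0]
```

`layer_norm` needs only a single vector, which is the sense in which it "does not need to consider the batch", while `batch_norm_means` cannot be computed without several examples.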

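The output of layer normalization is what goes into the FC network. The FC network also has a residual structure, so we add the FC network's input to its output to get a new output, and that is the output of one block in the Transformer encoder. Actually, I left one thing out: after the residual connection around the FC network, we are still not done. You take the residual result and apply layer normalization once more. We did it once here, and we do it again here. The output of that is the real output of one block in the Transformer encoder. So this is quite complicated.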
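Putting the pieces together, one encoder block in the original ordering (sub-layer, residual addition, layer normalization, twice) might be sketched as follows. The toy sub-layers have no learned weights and are assumptions for illustration only: real self-attention and feed-forward layers are learned, and each block has its own parameters. `eps` is likewise an added assumption.

```python
import math


def layer_norm(x, eps=1e-5):
    """Normalize one vector across its own dimensions (eps is an added safeguard)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def add_and_norm(inputs, outputs):
    """Residual addition followed by layer normalization, position by position."""
    return [layer_norm([a + b for a, b in zip(x, y)])
            for x, y in zip(inputs, outputs)]


def toy_self_attention(seq):
    """Stand-in for self-attention: each position sees the average of the sequence."""
    dim = len(seq[0])
    mean = [sum(v[d] for v in seq) / len(seq) for d in range(dim)]
    return [list(mean) for _ in seq]


def toy_feed_forward(x):
    """Stand-in for the fully connected network, applied to one position at a time."""
    return [max(0.0, 2.0 * v) for v in x]


def encoder_block(seq, self_attention=toy_self_attention,
                  feed_forward=toy_feed_forward):
    attended = self_attention(seq)               # considers the whole sequence
    hidden = add_and_norm(seq, attended)         # first residual + layer norm
    fc_out = [feed_forward(v) for v in hidden]   # per-position FC network
    return add_and_norm(hidden, fc_out)          # second residual + layer norm


def encoder(seq, num_blocks=2):
    """Stack several blocks; the output of one block is the input of the next."""
    for _ in range(num_blocks):
        seq = encoder_block(seq)
    return seq


out = encoder([[1.0, 2.0, 3.0], [4.0, 5.0, 7.0]])
print(len(out), len(out[0]))  # same number of vectors and same dimension as the input
```

Passing the sub-layers in as arguments keeps the ordering of operations visible in `encoder_block`, which is the part the lecture is describing.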

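So this figure here shows exactly what we just described. First there is self-attention. At the input there is also positional encoding. As we said before, if you use self-attention alone, you have no position information, so you need to add positional information, and the figure draws that explicitly. This block is labeled Multi-Head Attention; it is the self-attention block, and the label emphasizes that it is multi-head self-attention. Then there is Add & Norm. What does that mean? It is residual plus layer normalization. We said that self-attention has a residual connection followed by layer normalization; Add & Norm in the figure means exactly that. Next comes the feed-forward network, the fully connected feed-forward network, followed by another Add & Norm, another residual plus layer norm, and that gives the output of one block. This block is then repeated N times. This complicated block will be used again in BERT, a very important model we will cover later. BERT is in fact the Transformer encoder.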

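At this point you must be full of questions. Why is the Transformer encoder designed this way? Would a different design work? Yes, it does not have to be designed this way. I have described the encoder architecture as it appears in the original paper, but the original design is not necessarily the best, the most optimal design. For example, there is a paper called "On Layer Normalization in the Transformer Architecture". The question it asks is: why is layer normalization placed where it is? Why do we do the residual first and then layer normalization? Could we put layer normalization at the input of each block instead, so that normalization is applied first and the result is only then added back through the residual connection? In the figure, the left side is the original Transformer and the right side is the Transformer with the order inside the block slightly changed. After changing the order, the results are better. This means that the original Transformer architecture is not the optimal design, and you can always think about whether there is a better way to design it.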
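The two orderings can be compared side by side on a single vector. This is a sketch under assumptions: the names `post_ln` and `pre_ln`, the doubling sub-layer, and the `eps` term are illustrative and not taken from the lecture or the paper.

```python
import math


def layer_norm(x, eps=1e-5):
    """Normalize one vector across its own dimensions (eps is an added safeguard)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def add(x, y):
    return [a + b for a, b in zip(x, y)]


def post_ln(x, sublayer):
    """Original ordering: sub-layer, residual addition, then layer norm."""
    return layer_norm(add(x, sublayer(x)))


def pre_ln(x, sublayer):
    """Reordered variant: layer norm at the sub-layer input, then residual addition."""
    return add(x, sublayer(layer_norm(x)))


def double(v):
    """Toy sub-layer standing in for self-attention or the FC network."""
    return [2.0 * t for t in v]


x = [1.0, 2.0, 3.0]
post = post_ln(x, double)
pre = pre_ln(x, double)
print(sum(post) / len(post))  # about 0: the block output itself is normalized
print(sum(pre) / len(pre))    # about 2: the input passes through the skip path unchanged
```

In the reordered variant the skip path carries `x` through without normalization, which is the structural difference between the left and right diagrams described in the lecture.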

Another question is: why layer norm and not something else? Why not batch normalization? Maybe this paper can answer that: "Power Norm: Rethinking Batch Normalization In Transformers". It first explains why batch normalization is worse than layer normalization in Transformers. Then it proposes power normalization, which, as the name suggests, sounds very powerful, and whose performance is comparable to or even slightly better than that of layer normalization.

4.7 / 5 (35 votes)

【人工智能】万字通俗讲解大语言模型内部运行原理 | LLM | 词向量 | Transformer | 注意力机制 | 前馈网络 | 反向传播 | 心智理论

Why & When You Should Use Claude 3 Over ChatGPT

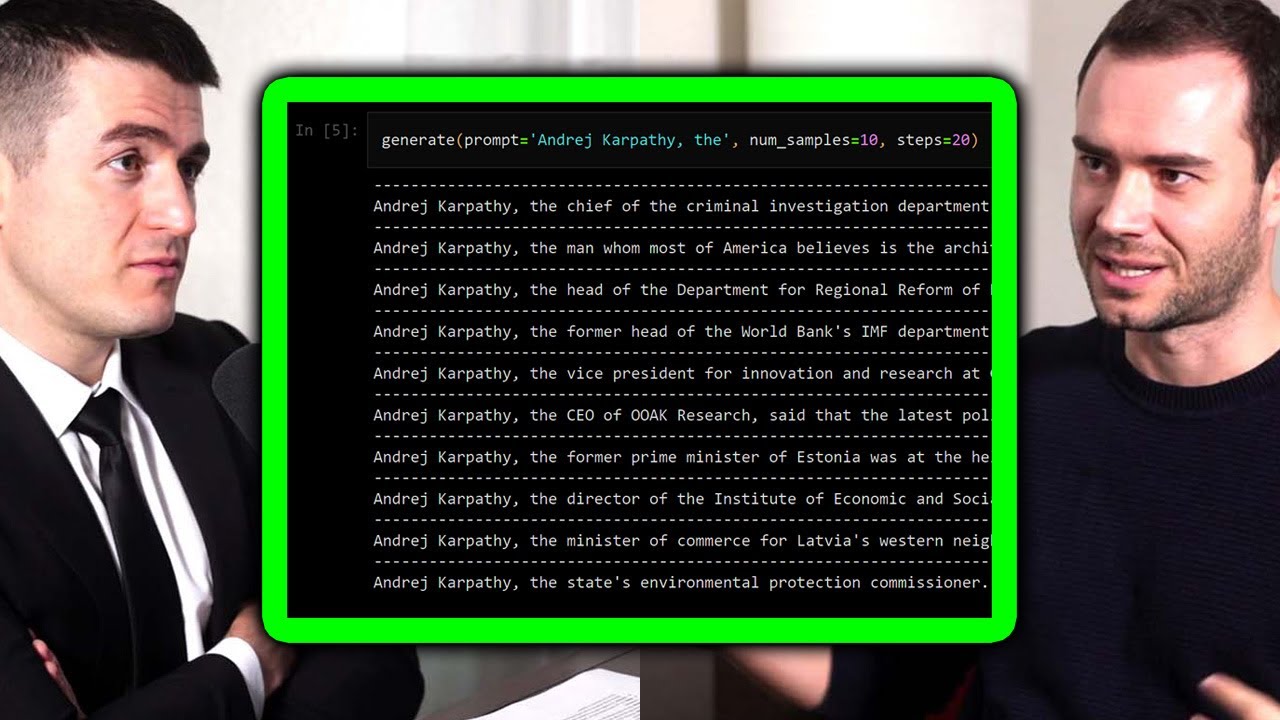

Transformers: The best idea in AI | Andrej Karpathy and Lex Fridman

Our Favorite Productivity Apps!

The M3 MacBook Air "Problems"

Simple Introduction to Large Language Models (LLMs)